Like many others, I’ve recently started to grok Twitter (it took a while), but I now find it a fantastic way to keep in touch with folk I know or respect, and to catch up on snippets of info from news services around the web. It’s great.

What makes Twitter particularly useful as a way of keeping in touch with a large number of people is the limit of 140 characters per ‘tweet’. That’s it: each tweet is 140 characters or fewer. But this also means that if you tweet about a URL, that URL eats up a lot of those 140 characters. To help solve this problem Twitter uses TinyURL to shorten the URL. This solves the problem, but unfortunately it also creates a new one.

URLs are important. They are at the very heart of the ideas behind Linked Data, the semantic web and Web 2.0: if you can’t point to a resource on the web then it might as well not exist, and that means URLs need to be persistent. URLs also matter because they tell you about the provenance of a resource, which helps you decide how important or trustworthy it is likely to be.

URL shortening services such as TinyURL or RURL are very bad news because they break the web. They don’t provide stable references: each one adds another level of indirection, and that level is a single point of failure. URL shortening services, then, are an anti-pattern:

> In computer science, anti-patterns are specific repeated practices that appear initially to be beneficial, but ultimately result in bad consequences that outweigh the hoped-for advantages.

URL shortening services create opaque URLs: the ultimate destination is hidden from both the user and any software that handles the link. This might not sound like a big deal, but it makes it easier to send people to spam or malware sites (which is why Qurl and jtty closed, breaking all their shortened URLs in the process). And that highlights the real problem: they introduce a dependency on a third party that might go belly up. If that third party closes down, every URL using its service breaks, and because the URLs are opaque you have no idea where they originally pointed.
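To make the indirection concrete, here is a toy sketch of how a shortener works in principle. It is not how TinyURL or any real service is implemented; the `Shortener` class, the `short.example` domain and the 62-symbol alphabet are all hypothetical. The point it illustrates is that the short code carries no information about the destination, and the only way back to the long URL is a lookup table held by one party.

```python
import secrets
import string
from typing import Dict, Optional

# Hypothetical code alphabet: upper- and lower-case letters plus digits (an assumption).
ALPHABET = string.ascii_letters + string.digits


class Shortener:
    """Toy URL shortener: one private table mapping opaque codes to long URLs."""

    def __init__(self, base: str, code_length: int = 6) -> None:
        self.base = base
        self.code_length = code_length
        self._table: Dict[str, str] = {}  # the single point of failure

    def shorten(self, long_url: str) -> str:
        # Refuse to loop forever once every possible code has been issued.
        if len(self._table) >= len(ALPHABET) ** self.code_length:
            raise RuntimeError("keyspace exhausted")
        # Pick a random unused code; it reveals nothing about long_url.
        while True:
            code = "".join(secrets.choice(ALPHABET) for _ in range(self.code_length))
            if code not in self._table:
                self._table[code] = long_url
                return f"{self.base}/{code}"

    def resolve(self, short_url: str) -> Optional[str]:
        # Only this service can turn the code back into a destination.
        code = short_url.rsplit("/", 1)[-1]
        return self._table.get(code)

    def shut_down(self) -> None:
        # The service closes and its table disappears with it.
        self._table.clear()


if __name__ == "__main__":
    s = Shortener("http://short.example")
    short = s.shorten("http://example.org/reports/2009/annual.html")
    print(short)             # an opaque URL such as http://short.example/<6 random chars>
    print(s.resolve(short))  # the original long URL
    s.shut_down()
    print(s.resolve(short))  # None: the link is now dead and its target unknown
```

Nothing in `short` lets a reader or a crawler recover the destination once `shut_down()` has run; a naked URL would have needed no table at all.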

And even if the service doesn’t shut down, there would be nothing you could do if it decided to censor content. For example, the Chinese Communist Party might demand that TinyURL remap every URL it considered inappropriate so that it pointed to a state propaganda page instead. You couldn’t stop them.

But of course we don’t need to invoke such Machiavellian scenarios to find a problem. URL shortening services have a finite number of available URLs. Some shortening services, like RURL, use three characters (e.g. http://rurl.org/lbt), which means these more aggressive URL shortening services have only about 250,000 possible unique short URLs. Once they’ve all been used, the service must either add more characters to its URLs or start to recycle old ones. And once you start recycling old URLs your karma really will suffer. (TinyURL uses six characters, so this problem will take a lot longer to materialise!)
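The size of a shortener’s keyspace is simply the alphabet size raised to the code length. The sketch below assumes two possible alphabets, since the exact character set each service uses isn’t stated here: 62 symbols (upper- and lower-case letters plus digits) and 36 symbols (lower-case letters plus digits). The “about 250,000” figure corresponds to the 62-symbol case.

```python
import string

# Assumption: case-sensitive codes, i.e. A-Z, a-z and 0-9 (62 symbols).
CASE_SENSITIVE = string.ascii_letters + string.digits
# Alternative assumption: lower-case letters and digits only (36 symbols).
CASE_INSENSITIVE = string.ascii_lowercase + string.digits


def keyspace(alphabet_size: int, length: int) -> int:
    """Number of distinct codes of exactly `length` characters."""
    return alphabet_size ** length


if __name__ == "__main__":
    for name, alphabet in [("62-symbol", CASE_SENSITIVE), ("36-symbol", CASE_INSENSITIVE)]:
        for length in (3, 6):
            print(f"{name}, {length} chars: {keyspace(len(alphabet), length):,}")
```

This prints 238,328 and 56,800,235,584 for the 62-symbol alphabet, and 46,656 and 2,176,782,336 for the 36-symbol one. A three-character service therefore runs out after a few hundred thousand links at best, while six characters push exhaustion out by several orders of magnitude.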

There is an argument that on services such as Twitter the permanence of the URL isn’t such an issue; after all, the whole point of Twitter is to provide transitory, short-lived announcements, and it isn’t intended to be an archive. The fact that the provenance of the URL is obfuscated maybe doesn’t matter much either, since you know who posted the link. All that’s true, but it still causes a problem when TinyURL goes down, as it did last November, and it reinforces the anti-pattern.

Bottom line: URLs should remain naked. Providing this level of indirection is just wrong. The Internet isn’t supposed to work via such intermediate services; it was designed so that there would be no single point of failure that could so easily break such large parts of the web.

Of course, simply saying “don’t use these URL shortening services” isn’t going to work, especially on services such as Twitter where there is a genuine need for short URLs. What it does mean is that if you’re designing a website you need to think about URL design, and that includes the length of your URLs. And if you’re linking to something on a forum, wiki, blog or anything else that has permanence, please don’t shorten the URL; keep it naked. Thanks.

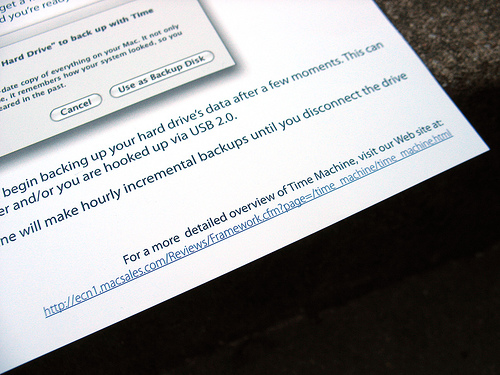

Photo: Example of poor URL design, by Frank Farm. Used under licence.